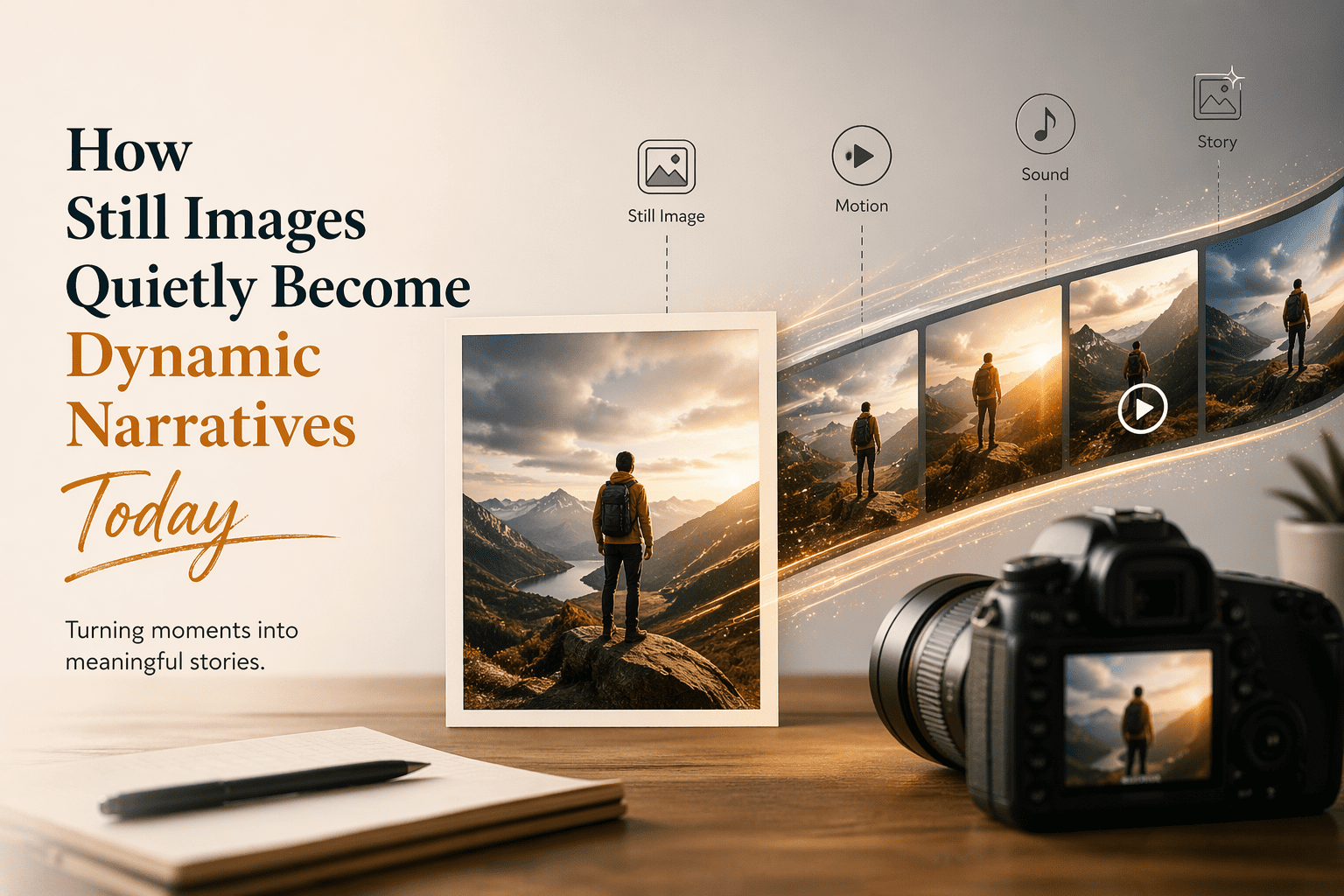

How Still Images Quietly Become Dynamic Narratives Today

- 1 Why Static Images Are No Longer Enough Alone

- 2 The Old Way of Making Videos is Difficult

- 2.1 Creating Content with Purpose

- 2.2 How the Tool Understands What You’re Trying to Say

- 2.3 Structure as Constraints

- 2.4 Model Choice Influences Output Style

- 3 How The Real-World Flow Looks

- 4 Comparing Generative Workflow And Traditional Editing

- 4.1 What Makes Sense Here

- 4.2 Exploration Of Concepts and Testing Ideas

- 4.2.1 The Limitations

- 4.2.2 Reliance On The Prompt

- 4.2.3 Presence Of Motion Gaps

- 4.3 What This Means About Creative Direction

- 4.4 Emergence Of A Different Skill Set

- 4.5 Make Your Video Stand Out: What Photo To Video Says About A Larger Transition

- 4.6 The Implication for Future Workflows

- 4.7 Widening The Narrative: What This Transformation Really Means

- 4.8 The Psychological Transformation In Creative Work

- 4.9 Speed Changes Decision-Making

- 4.10 New Forms Of Creative Literacy

- 4.11 Breaking Down The Barrier Between Image And Video

- 4.12 Where Traditional Tools Still Matter

- 4.13 The Democratization Of Motion Content

- 5 Final Reflection

In most creative workflows, the idea feels clear at some point, but carrying it out quickly starts to seem overly complicated. You can picture the mood in your mind's eye, maybe even some of the movement, but realizing it on video has always meant trimming clips in editing software and juggling timelines and format compatibility. Image to Video AI offers a new paradigm: it dramatically shortens the gap between an idea and what you can produce, and it does so in a different way.

You are no longer constructing motion one step at a time; you are describing it. That small pivot has a large impact on how visual concepts are created.

Why Static Images Are No Longer Enough Alone

How images fit into digital content has shifted.

Motion As The New Normal

Motion is no longer just a feature; in most settings it is an expectation. Static compositions increasingly read as placeholders rather than finished expressions.

The Old Way of Making Videos is Difficult

Traditional video production involves many time-consuming steps, such as:

- creating timelines and layers

- manually setting keyframes and adding transitions

- managing export settings

- waiting for the video to render

This friction has driven interest in far simpler solutions.

Creating Content with Purpose

The question is no longer only “how do I add movement here?” Instead, creators are asking:

- What should animate

- What the animation should feel like

- How the video should feel overall

This is where the software that converts photos into videos comes into play.

How the Tool Understands What You’re Trying to Say

This is less about ‘generative’ artificial intelligence than about ‘translative’ artificial intelligence, and that is the really interesting part of the story. The shift is one of function: rather than inventing content from scratch, the system translates a natural-language description into motion applied to an existing image.

Prompts we tried with the system included:

- slow cinematic zoom

- soft movement in the environment

- subtle emotional lighting

Each description was interpreted as a pattern of motion that the system could reproduce. In effect, the system acts as a translator, turning descriptive language into movement.

Structure as Constraints

The uploaded image defines the structural constraints:

- composition

- subject hierarchy

- spatial balance

Whatever motion is generated generally stays within this frame.

Model Choice Influences Output Style

The choice of model seems to affect:

- realism

- motion smoothness

- stylistic interpretation

Without explicit controls for these qualities, the choice of model itself is the main influence on style.

How The Real-World Flow Looks

The official process is kept as minimal as possible: three steps take you from an input image to a finished video.

Step 1: Upload Source Image

Provide a base image in JPEG or PNG format.

Step 2: Enter Motion Description

Describe the movement, tone, and style you want in plain English.

Step 3: Generate and Wait

The system processes the request, and moments later a video is created.

The model is simple but restrictive: there are no intermediate editing steps.

Comparing Generative Workflow And Traditional Editing

| Aspect | Generative Approach | Traditional Editing |
|---|---|---|
| Entry Barrier | Low | High |
| Time To Output | Minutes | Hours |
| Control Precision | Medium | High |
| Learning Curve | Minimal | Steep |
| Iteration Speed | Fast | Slow |

The difference lies more in workflow philosophy than in capability.

What Makes Sense Here

Short-Form Content Creation

Quick turnaround, multiple variations, and low production cost make this approach appealing for fast-moving platforms.

Exploration Of Concepts and Testing Ideas

For creators who have not yet committed to a direction, it offers a way to:

- Test several visual variations

- Iterate quickly

- Refine ideas through successive passes

The Limitations

Generative systems will always have some limitations.

Reliance On The Prompt

Results are only as good as the intent you express: the clearer the prompt, the better the output.

Presence Of Motion Gaps

Motion can be imprecise, especially in the fine details:

- Iteration is part of the process

- Reaching the intended result typically takes several passes

- This reflects the characteristics of current generative models

What This Means About Creative Direction

When execution becomes this fast, the creator's role becomes more abstract.

Shifting From Tactical Execution To Strategic Direction

The move is from constructing motion manually to expressing the end goal.

Emergence Of A Different Skill Set

The emphasis shifts to:

- Clarity of communication

- Visual understanding

- Iterative refinement

Make Your Video Stand Out: What Photo To Video Says About A Larger Transition

Image animation is not new. What has changed is how directly it can be done. Instead of building an animated sequence incrementally, Photo to Video systems infer intent and generate the motion in a single pass.

The Implication for Future Workflows

This does not mean that other tools and options disappear. Rather:

- Early-stage ideation becomes faster

- Experimentation becomes cheaper

- More creators can participate

For many creators, the first draft of a video may no longer be cut at all; it may be generated.

That alone changes how the creative process unfolds.

Widening The Narrative: What This Transformation Really Means

It is increasingly apparent that this shift is not just about convenience; it redefines authorship in visual storytelling. When you can describe motion instead of crafting it, the creator's role moves from technician to director of intent.

The implications go beyond efficiency. It changes how ideas are tested in their infancy. Instead of filtering ideas through technical feasibility first, creators can start from imagination. The tool acts as a bridge between the idea in your mind and what you see on screen, avoiding some of the creative compromises that production constraints usually force early on.

The Psychological Transformation In Creative Work

There is also a subtler, deeper psychological effect. Conventional editing keeps your eyes on the tools rather than on the ideas. Timelines, layers, and keyframes pull creators' attention away from the essence of their idea.

Intent-driven systems, by contrast, let creators stay closer to the feel they are trying to achieve. The interaction becomes more conversational:

- describe

- observe

- adjust

- regenerate

This loop feels more like sculpting than instructing software step by step.

Speed Changes Decision-Making

Decision-making changes when iterations take minutes instead of hours. Because the cost of failure is low, creators are more willing to experiment, which favors exploration over perfection.

In traditional workflows, every modification carries a time penalty, which encourages conservative decisions. Generative workflows shift from scarcity to abundance: several variants can coexist, and the “best” one can be chosen from actual results rather than predictions or expectations.

New Forms Of Creative Literacy

As motion-based narratives become widespread, a new kind of literacy may be emerging: the ability to describe motion and mood precisely enough for a system to convey them.

This includes:

- Understanding how language relates to visual behavior

- Knowing which descriptors yield reliable outcomes

- Recognizing how minor changes in wording alter the output

In many ways, prompt writing becomes a creative craft that develops much like directing or storyboarding. Precise wording matters at least as much as the revisions that follow, because the first description shapes everything that comes after it.

Breaking Down The Barrier Between Image And Video

Perhaps the most consequential effect is that the boundary between still and moving images is dissolving. Images used to be static endpoints; now they are starting points.

A single photo, say of someone cycling, can suddenly extend through time and become a shot, without a production pipeline. This increases the reusability, versatility, and narrative power of each image.

Eventually, this could even change how images are captured in the first place. Creators might shoot photos with motion in mind, anticipating how they could later be animated by generative systems.

Where Traditional Tools Still Matter

These trends do not mean the end of traditional software tools. Instead, those tools are becoming more specialized.

They remain essential for:

- precise control over complex scenes

- long-form narrative consistency

- detailed post-production adjustments

- professional-grade refinement

Generative tools stand out in early ideation and rapid production. The future is likely to be a hybrid workflow: generating outputs quickly, then polishing them with traditional tools as needed.

The Democratization Of Motion Content

The impact on access may be one of the largest. Making video has always required technical skill, and unless you invest considerable time in learning it, producing something that looks really good is slow. Lowering that barrier lets many more people into visual storytelling.

Not all content will be better, but more points of view can be shared. Visual stories will arguably change, and their availability will expand as more people produce them.

Final Reflection

What began as a technical shortcut has turned into a new creative framing. Turning a frozen image into motion by describing it is not simply a trick; it is a crossing of the border between mind and medium.

These tools are still works in progress, and their limitations remain apparent. But the direction is becoming clear: creativity is moving away from building every detail by hand and toward expressing intent that systems carry out.

In that shift, turning images into video becomes less about mechanics and more about concepts: more fluid, and perhaps a little closer to the way ideas themselves take shape.