Top 7 NLP Techniques that will transform your life


Building machines that can imitate the workings and capacities of the human mind has long been a goal of Artificial Intelligence. Language is one of the most significant human achievements and a major driver of human development, so it is not surprising that much effort goes into bringing language into artificial intelligence in the form of Natural Language Processing (NLP). Today, we can see that effort realized in the likes of Alexa, Bing, and Siri. At the same time, the volume of unstructured data in the form of images, text, music, and video has grown in recent years, which makes techniques for processing it automatically all the more useful.


In this article, we shall look at the top 7 NLP techniques that will transform your life and help you build expertise in Natural Language Processing. So, let’s begin our journey:

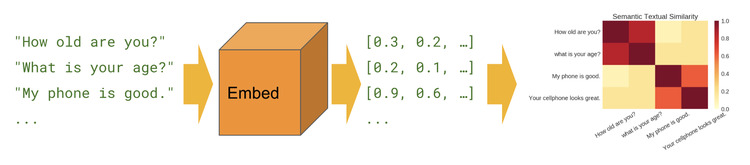

NLP Technique 1: Word Embeddings

The first of the top 7 NLP techniques is word embeddings. To build any deep learning or machine learning model, the dataset must be in numerical or vector form, since models cannot understand raw text or images the way people do. NLP provides practical ways to convert text into numerical form, a process known as vectorization or, more precisely, word embedding. Traditional NLP treated words as discrete symbols, each represented by a one-hot vector. The problem is that one-hot vectors carry no notion of similarity: any two distinct words are equally far apart, however related their meanings. The alternative is to learn to encode similarity in the vectors themselves. The central idea is that a word’s meaning is given by the words that frequently appear near it.
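To make the contrast concrete, here is a minimal sketch in plain Python. The toy corpus and the helper names (`cosine`, `one_hot`, `cooccurrence`) are assumptions made for illustration. Counting neighbouring words is only a crude stand-in for learned embeddings such as Word2Vec, but it shows the same idea: words that appear in similar contexts end up with similar vectors, while their one-hot vectors never do.

```python
import math

def cosine(u, v):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        return 0.0
    return dot / (norm_u * norm_v)

# Hypothetical toy corpus, chosen only for illustration.
corpus = [
    "the cat drinks milk",
    "the dog drinks water",
    "the cat chases the dog",
]

vocab = sorted({word for sentence in corpus for word in sentence.split()})
index = {word: i for i, word in enumerate(vocab)}

def one_hot(word):
    """Vector with a single 1 at the word's vocabulary position."""
    vec = [0] * len(vocab)
    vec[index[word]] = 1
    return vec

def cooccurrence(word, window=1):
    """Count the words that appear within `window` positions of `word`."""
    vec = [0] * len(vocab)
    for sentence in corpus:
        tokens = sentence.split()
        for pos, token in enumerate(tokens):
            if token != word:
                continue
            lo = max(0, pos - window)
            hi = min(len(tokens), pos + window + 1)
            for j in range(lo, hi):
                if j != pos:
                    vec[index[tokens[j]]] += 1
    return vec

one_hot_sim = cosine(one_hot("cat"), one_hot("dog"))            # always 0.0
context_sim = cosine(cooccurrence("cat"), cooccurrence("dog"))  # well above 0
print(one_hot_sim, context_sim)
```

In this corpus "cat" and "dog" both follow "the" and precede "drinks", so their context vectors point in nearly the same direction even though their one-hot vectors are orthogonal.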

Word or text embeddings are the standard way to turn text into numerical form. These numerical vectors are then used to build different machine-learning models. Word embeddings are considered a great starting point for most deep NLP tasks. They allow deep learning to be effective on smaller datasets, as they are often the first inputs to a deep learning architecture and the most popular form of transfer learning in NLP.

The most popular word embedding and text vectorization methods used by NLP developers include Word2Vec, Poincaré embeddings, Bag of Words, Sense2Vec, Skip-Thought, GloVe, TF-IDF, adaptive skip-gram, fastText, and Embeddings from Language Models (ELMo).

If you are a beginner who is just learning NLP and diving into word embeddings, Bag of Words is a good place to start. It is simply a way of preprocessing text by converting it into numbers: each document becomes a vector of word counts over a fixed vocabulary. Implementing Bag of Words in Python is a worthwhile exercise to work through before moving on to more advanced methods and building a career in this field.
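A Bag of Words implementation can be sketched with nothing but the Python standard library. The function names `tokenize` and `bag_of_words` and the two sample sentences are assumptions made for this example. The tokenizer is deliberately simple; libraries such as scikit-learn offer more complete vectorizers.

```python
from collections import Counter

def tokenize(text):
    """Lowercase, split on whitespace, and strip surrounding punctuation."""
    tokens = (word.strip(".,!?;:\"'()") for word in text.lower().split())
    return [t for t in tokens if t]

def bag_of_words(documents):
    """Return (vocabulary, vectors), with one word-count vector per document."""
    tokenized = [tokenize(doc) for doc in documents]
    vocabulary = sorted({token for tokens in tokenized for token in tokens})
    vectors = []
    for tokens in tokenized:
        counts = Counter(tokens)  # missing words count as 0
        vectors.append([counts[word] for word in vocabulary])
    return vocabulary, vectors

docs = ["The cat sat on the mat.", "The dog sat on the log."]
vocabulary, vectors = bag_of_words(docs)
print(vocabulary)  # ['cat', 'dog', 'log', 'mat', 'on', 'sat', 'the']
print(vectors)     # [[1, 0, 0, 1, 1, 1, 2], [0, 1, 1, 0, 1, 1, 2]]
```

Note that word order is discarded: the vector only records how often each vocabulary word occurs, which is exactly the limitation that later embedding methods try to overcome.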

NLP Technique 2: Sentiment Analysis

The second technique is sentiment analysis. In Natural Language Processing, sentiment analysis, or opinion mining, is a technique that determines whether input data is positive, neutral, or negative, typically using a model trained on a large amount of labeled data. It is mainly performed on textual data, where it helps businesses monitor their product and brand reputation by automatically understanding customer feedback from survey responses and social media conversations, and then tailoring their products to customers’ needs. For instance, suppose you collect 3000+ reviews from customers who use a particular beauty product of yours. These reviews can help you understand whether customers are satisfied with the product and your customer service.

Depending on the customer category and product, we can choose the kind of model that meets our analysis needs. Most sentiment analysis models focus on polarity, i.e., very positive, positive, neutral, negative, or very negative. Others detect emotions and feelings (excitement, happiness, anger, sadness, etc.), urgency (urgent or not urgent), or intent (interested or not interested). The types of sentiment analysis include fine-grained sentiment analysis, emotion detection, aspect-based sentiment analysis, and multilingual sentiment analysis. Sentiment analysis is essential today because it offers real-time analysis, consistent criteria, and large-scale data sorting.
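As a rough illustration of polarity analysis, the sketch below scores a review by counting words from two tiny hand-written word lists. The lexicon, the `polarity` function, and the sample reviews are all hypothetical. Production systems usually learn from labeled data instead, and this toy version ignores negation ("not good") and intensity.

```python
# Hypothetical miniature lexicon; real systems learn such signals from labeled data.
POSITIVE = {"good", "great", "love", "excellent", "happy", "satisfied"}
NEGATIVE = {"bad", "poor", "hate", "terrible", "unhappy", "disappointed"}

def polarity(review):
    """Classify a review as 'positive', 'negative', or 'neutral' by word counts."""
    tokens = [word.strip(".,!?;:\"'") for word in review.lower().split()]
    score = sum(t in POSITIVE for t in tokens) - sum(t in NEGATIVE for t in tokens)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"

reviews = [
    "I love this cream, it is great!",
    "Terrible service and poor packaging.",
    "The parcel arrived on Tuesday.",
]
labels = [polarity(r) for r in reviews]
print(labels)  # ['positive', 'negative', 'neutral']
```

Applying the same function to every review gives the consistent criteria and large-scale sorting mentioned above, which is hard to achieve when people label thousands of reviews by hand.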

NLP Technique 3: Machine Translation

The third technique is machine translation. Imagine trying to converse with somebody who does not understand your language. It is an awkward situation. Machine translation helps you handle such situations smoothly because it translates one natural language into another without any human intervention. Researchers have worked on it for a long time, in part because machine translation can translate large volumes of text in a short time. Most of us were introduced to the concept when Google Translate came to our fingertips.

In the classical approach, a machine translator first analyzes the structure of the source-language sentence, then splits it into single words or short phrases that can be translated easily. Finally, it reassembles those translated words or phrases using the equivalent structure in the chosen target language. Real-world applications of machine translation include text translation and speech translation, which are widely used in the business and travel industries.
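The split, translate, and reassemble steps can be sketched with a deliberately naive phrase lookup. The English-to-French `PHRASE_TABLE` and the `translate` function are hypothetical. Real translation systems also reorder words and resolve ambiguity, which this toy does not attempt.

```python
# Hypothetical English-to-French phrase table, for illustration only.
PHRASE_TABLE = {
    ("good", "morning"): "bonjour",
    ("thank", "you"): "merci",
    ("my",): "mon",
    ("friend",): "ami",
}

def translate(sentence, table=PHRASE_TABLE, max_len=2):
    """Greedy longest-match lookup; unknown words are passed through unchanged."""
    tokens = sentence.lower().split()
    output = []
    i = 0
    while i < len(tokens):
        # Try the longest phrase first, then fall back to shorter ones.
        for n in range(min(max_len, len(tokens) - i), 0, -1):
            phrase = tuple(tokens[i:i + n])
            if phrase in table:
                output.append(table[phrase])
                i += n
                break
        else:
            output.append(tokens[i])  # no entry found: keep the source word
            i += 1
    return " ".join(output)

print(translate("Good morning my friend"))  # bonjour mon ami
```

Trying the longest phrase first matters: "good morning" is translated as a unit rather than word by word, which is the point of splitting into short phrases as well as single words.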

There are mainly four types of machine translation in NLP, namely:

Statistical Machine Translation, or SMT, relies on statistical models built from the analysis of huge volumes of bilingual text.

Hybrid Machine Translation, or HMT, combines more than one approach, for example rule-based and statistical translation, and often uses a translation memory.

Rule-based Machine Translation, or RBMT, translates on the basis of grammatical rules.

Neural Machine Translation, or NMT, relies on neural network models.

NLP Technique 4: Chatbots

The fourth technique is chatbots. A chatbot, commonly known as a bot, is computer software that mimics real-life human communication. Users converse with a chatbot through a chat interface such as messaging, email, or voice, just as they would put their queries to a human. Chatbots process and interpret the user’s words or phrases and reply, in the simplest case with a pre-programmed response. They are commonly found on messaging platforms such as WeChat, WhatsApp, Facebook Messenger, Skype, Line, Slack, and Kik, and we can also embed them in our own websites or apps.

Natural Language Understanding (NLU) and Natural Language Generation (NLG) are the two processes through which NLP works in chatbots. NLU is the chatbot’s capacity to comprehend a person: it turns language into structured data that a machine can work with. NLG does the reverse and converts structured data into text.

There are three sorts of chatbots:

Rule-based chatbots: This is the most basic form of chatbot available today. People engage with these bots by clicking buttons and selecting pre-programmed options. The user has to make a few choices before the bot can provide an appropriate reply. As a result, these bots have the longest user journeys and tend to be the slowest at helping customers reach their goals. They are, however, well suited to qualifying leads: the chatbot asks questions, users respond by pressing buttons, and the bot evaluates the collected data and responds. For more complex situations with several variables or causes, rule-based chatbots aren’t always the ideal answer.

Intellectually independent chatbots: These chatbots employ Machine Learning (ML), which helps the chatbot learn from the user’s inputs and requests. They are trained to recognize specific keywords and phrases that prompt the bot to respond, and over time they learn to understand more and more queries. In other words, they learn from experience. For example, you may tell a chatbot, “I have forgotten the password of my account.” The bot would recognize the terms “forgotten,” “password,” and “account” and would reply with a pre-programmed response based on these keywords.

AI-powered chatbots: AI-powered bots combine the advantages of rule-based and intellectually independent bots. Artificial Intelligence is the simulation of human intelligence by computers, and so AI-powered chatbots comprehend natural language. Still, they also follow a preset path to make sure they address the user’s problem. They can recall the conversation’s context as well as the user’s preferences. When necessary, these chatbots can jump from one point in the conversation to another and handle unexpected user requests at any time. To comprehend humans, these chatbots employ Machine Learning, Deep Learning, and Natural Language Processing (NLP).
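The keyword-triggered behaviour from the forgotten-password example can be sketched as follows. The `INTENTS` list, the canned replies, and the `min_hits` threshold are assumptions made for illustration. A learning chatbot would adjust such associations from user interactions rather than have them hard-coded.

```python
# Hypothetical intents: each pairs a set of trigger keywords with a canned reply.
INTENTS = [
    ({"forgotten", "password", "account"},
     "You can reset your password from the login page."),
    ({"refund", "order"},
     "I can help with refunds. What is your order number?"),
]
FALLBACK = "Sorry, I did not understand that. Could you rephrase?"

def reply(message, intents=INTENTS, min_hits=2):
    """Return the reply whose keywords overlap most with the message."""
    words = {word.strip(".,!?;:\"'") for word in message.lower().split()}
    best_reply, best_hits = FALLBACK, 0
    for keywords, response in intents:
        hits = len(keywords & words)
        if hits >= min_hits and hits > best_hits:
            best_reply, best_hits = response, hits
    return best_reply

print(reply("I have forgotten the password of my account."))
print(reply("Tell me a joke"))
```

The first call matches all three keywords and returns the password reply, while the second matches nothing and falls back to asking the user to rephrase.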

NLP Technique 5: Question Answering

The fifth technique is question answering. Question Answering (QA) is a computer science discipline within information retrieval and natural language processing (NLP) that focuses on building systems that automatically answer questions posed by humans in natural language. A question-answering application, usually a computer program, may construct its answers by querying a structured database of knowledge or information, often referred to as a knowledge base. More commonly, question-answering systems extract answers from an unstructured collection of natural language documents.

Question answering relies heavily on a good search corpus, since without documents containing the answer, no question-answering system can achieve anything. QA systems return a short, correct answer to a question rather than a list of candidate results. To do so, they detect text similarity and respond to questions posed in natural language. Some systems work with images rather than text. For instance, when you tap on picture boxes to prove that you are not a robot, you are actually teaching algorithms about what is in a particular image.
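A very small retrieval-style QA system can be sketched by picking the corpus sentence most similar to the question. Everything here is an assumption made for illustration: the stopword list, the three-sentence corpus, and the choice of Jaccard word overlap as the similarity measure. Modern systems use learned representations instead.

```python
# Small hypothetical stopword list; real systems use much larger ones.
STOPWORDS = {"a", "an", "the", "is", "was", "of", "in", "to", "for",
             "what", "who", "when", "where", "which", "does"}

def content_words(text):
    """Lowercased words with punctuation stripped and stopwords removed."""
    words = {word.strip(".,!?;:\"'") for word in text.lower().split()}
    return {w for w in words if w and w not in STOPWORDS}

def jaccard(a, b):
    """Size of the intersection divided by size of the union."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def answer(question, corpus):
    """Return the most similar sentence, or None if nothing overlaps."""
    q = content_words(question)
    best = max(corpus, key=lambda sentence: jaccard(q, content_words(sentence)))
    return best if jaccard(q, content_words(best)) > 0 else None

corpus = [
    "BERT stands for Bidirectional Encoder Representations from Transformers.",
    "Machine translation converts text from one natural language to another.",
    "Sentiment analysis classifies text as positive, neutral, or negative.",
]
print(answer("What is machine translation?", corpus))
print(answer("Who won the match?", corpus))  # None
```

The second question returns `None`, which mirrors the point above: if no document in the corpus contains the answer, the system has nothing to offer.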

All of this is feasible thanks to NLP technologies such as BERT, Google’s Bidirectional Encoder Representations from Transformers. Anyone interested in developing a QA system can use NLP to train machine learning models to answer domain-specific or general questions. Several datasets and tools are available online, so you can begin training models to learn from and interpret large amounts of human language data right away. NLP algorithms also use inference and probability to estimate the correct answer, which improves efficiency and accuracy. The advantage of QA systems for organizations is that they are very user-friendly: anyone can use the corporate QA system once it is built. In fact, if you’ve ever talked to Alexa or used Google Translate, you’ve seen NLP in action.

NLP Technique 6: Text Summarization

The sixth technique is text summarization. Text summarization is one of the Natural Language Processing (NLP) applications that will undoubtedly have a significant influence on our lives. With the proliferation of digital media and an ever-growing number of publications, who has the time to read full articles, documents, or books to decide whether they are useful? Fortunately, the technology to help is already available. Automatic text summarization is one of the most challenging and intriguing problems in NLP. It is the process of creating a concise and meaningful summary of text from a variety of sources, including books, news stories, blog posts, journal articles, emails, and tweets. The enormous volume of textual data now available is driving the demand for automatic text summarization systems.

Text summarization falls into two types.

Extractive summarization: This is the conventional approach and the first to be devised. The basic goal is to select the text’s most important sentences and include them in the summary. Note that the resulting summary consists of exact sentences from the original text.

Abstractive summarization: This is a more advanced approach, and one that is improving regularly. The strategy is to find the essential portions, analyze the context, and re-express them in a fresh way. This aims to convey the most essential information in as little text as possible. Note that the sentences in the summary are generated rather than extracted from the original text.
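The extractive approach is simple enough to sketch in a few lines. The version below scores each sentence by the average corpus frequency of its non-stopword words and keeps the top-scoring ones in their original order. The scoring rule, the stopword list, and the sample text are assumptions made for illustration, and other sentence-ranking schemes are common. Abstractive summarization needs a trained generative model and is not shown.

```python
import re
from collections import Counter

# Small hypothetical stopword list, for illustration only.
STOPWORDS = {"a", "an", "the", "is", "was", "are", "my", "of", "in", "to", "and"}

def content_words(text):
    """Lowercased alphabetic words with stopwords removed."""
    return [w for w in re.findall(r"[a-z']+", text.lower()) if w not in STOPWORDS]

def summarize(text, num_sentences=1):
    """Return the highest-scoring sentences, verbatim and in original order."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    sentences = [s.strip() for s in parts if s.strip()]
    freq = Counter(content_words(text))

    def score(sentence):
        words = content_words(sentence)
        return sum(freq[w] for w in words) / len(words) if words else 0.0

    ranked = sorted(range(len(sentences)),
                    key=lambda i: score(sentences[i]), reverse=True)
    keep = sorted(ranked[:num_sentences])  # restore document order
    return " ".join(sentences[i] for i in keep)

text = ("Attention helps translation models. "
        "Translation models read long sentences. "
        "My lunch was cold.")
print(summarize(text, 1))  # Attention helps translation models.
```

Because the summary is assembled from selected sentences, every sentence in it appears word for word in the source, which is the defining property of the extractive approach.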

NLP Technique 7: Attention Mechanism

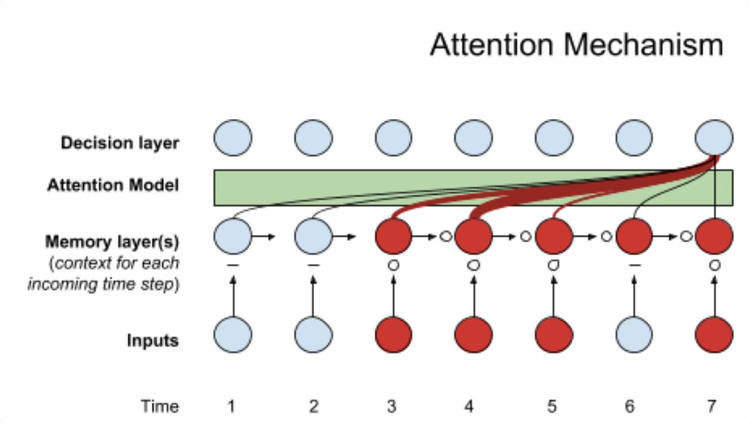

The seventh technique is the attention mechanism. It is a relatively recent development in deep learning, used particularly in applications such as machine translation, dialogue generation, and image captioning. It was designed to improve the performance of the encoder-decoder (seq2seq) RNN model. Attention addresses the encoder-decoder model’s restriction of encoding the entire input sequence into a single fixed-length vector, from which the output at every time step must be decoded. This restriction is a problem for long sequences, since it makes it hard for the neural network to handle long sentences, particularly those longer than the sentences in the training data. With attention, when the model tries to predict the next word, it looks for the set of positions in the source sentence where the most relevant information is concentrated.

The model then predicts the next word using the context vectors associated with these source positions together with all previously generated target words. Rather than encoding the input into a single fixed context vector, the attention model builds a context vector tailored to each output time step. The core principle is that when the model predicts an output word, it draws mainly on the parts of the input where the most relevant information is concentrated rather than on the whole sentence.
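The per-step context vector can be illustrated with a minimal sketch in plain Python. It assumes dot-product scoring between the current decoder state and each encoder state. This is one common choice among several, and the text above does not specify a scoring function. The vectors are made-up numbers, not outputs of a trained model.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    highest = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attend(decoder_state, encoder_states):
    """Dot-product attention: return (weights, context vector)."""
    scores = [sum(q * h for q, h in zip(decoder_state, state))
              for state in encoder_states]
    weights = softmax(scores)
    dim = len(encoder_states[0])
    # The context vector is the weighted sum of the encoder states.
    context = [sum(w * state[d] for w, state in zip(weights, encoder_states))
               for d in range(dim)]
    return weights, context

# Hypothetical encoder states for a three-word source sentence.
encoder_states = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
decoder_state = [0.0, 2.0]  # hypothetical decoder state at one output step

weights, context = attend(decoder_state, encoder_states)
print(weights)
print(context)
```

The first source position receives the smallest weight because its state is orthogonal to the decoder state. Calling `attend` again with a different decoder state yields a different context vector, which is what "tailored to each output time step" means.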

The attention mechanism is a valuable method in NLP tasks since it improves accuracy and BLEU scores and works well with long texts. Its main drawback is that it is computationally expensive, and when used with an RNN-based encoder-decoder it remains difficult to parallelize.