Transforming Professional Cinematic Workflows With Seedance 2.0 Video Generation

- 1 Redefining Narrative Coherence In Artificial Intelligence Content Creation

- 1.1 Mastering Character Consistency Across Complex Scene Transitions

- 1.2 Integrating Native Audio Synthesis For Cinematic Immersion

- 1.3 Pushing Temporal Boundaries With Extended Sequence Generation

- 2 Executing The Official Four-Step Video Production Lifecycle

- 2.1 Drafting Precise Directorial Prompts And Visual References

- 2.2 Configuring Technical Parameters For Target Media Platforms

- 2.3 Processing Multimodal Data Through Advanced AI Architecture

- 2.4 Reviewing And Exporting Watermark-Free Production Assets

- 3 Evaluating Architectural Advantages Against Conventional Generation Models

The landscape of digital visual storytelling consistently demands immense resources, forcing creators to balance artistic ambition against harsh budget realities. Independent studios, marketing agencies, and educational content producers often find themselves compromising on visual fidelity or narrative complexity due to the prohibitive costs of practical shooting and exhaustive post-production editing. While early automated generative systems promised a new era of relief, they frequently introduced entirely new frustrations: characters morphing randomly between camera cuts, disjointed temporal flow that ruined viewer immersion, and a hollow silence requiring completely separate sound design workflows.

Addressing these specific operational bottlenecks, Seedance 2.0 emerges as a sophisticated multimodal architecture designed to bridge the gap between text concepts and broadcast-ready deliverables. By prioritizing spatial-temporal logic and native sensory integration, this technological framework provides a more reliable foundation for professionals seeking to scale their visual output without sacrificing narrative integrity.

Redefining Narrative Coherence In Artificial Intelligence Content Creation

The primary challenge in automated video rendering has historically been maintaining a unified creative vision over extended periods. This specific model addresses the core issue of visual instability that frequently plagues fragmented generation systems, introducing stability to complex scene compositions.

Mastering Character Consistency Across Complex Scene Transitions

In my observations of the platform, the underlying diffusion transformer demonstrates a highly robust capacity for spatial memory and geometric tracking. Instead of treating each generated second as an isolated painting, the architecture actively tracks facial geometry, clothing textures, and lighting environments across multiple simulated camera angles. This means a protagonist introduced in a wide establishing shot remains entirely recognizable in an extreme close-up later in the sequence. This capability allows directors to build authentic emotional connections through multi-shot storytelling rather than relying on rapid, disconnected montages.

Integrating Native Audio Synthesis For Cinematic Immersion

The most disruptive aspect of this technology is arguably its parallel multimodal processing approach to sound. The system does not merely render silent moving pixels; it simultaneously calculates and applies environmental acoustic properties. As the visual data takes shape, the underlying model generates synchronized ambient sounds and corresponding physical interaction audio that match the on-screen events. This holistic approach drastically reduces the operational friction of sourcing external royalty-free audio libraries and manually aligning sound waves to visual cues in a secondary software environment.

Pushing Temporal Boundaries With Extended Sequence Generation

Strict short-form limitations have historically restricted generative visual tools to creating glorified moving wallpapers rather than actual films. Through advanced sequence expansion techniques, this model supports generating unified narratives spanning up to a full minute in duration. This extended temporal capacity allows for the development of proper story arcs, dynamic pacing variations, and detailed product demonstrations that require actual breathing room to impact the audience effectively.

Balancing High Resolution Output With Generation Efficiency

Despite the massive computational load required to maintain temporal consistency and audio synchronization, the rendering pipeline handles up to ultra-high-definition resolution with surprising efficiency. Producing professional-grade textures and natural color gradients, the system delivers visually dense frames suitable for large-format displays. Furthermore, it manages to keep processing times manageable on modern hardware infrastructure, rendering high-definition segments in a matter of seconds rather than hours.

Executing The Official Four-Step Video Production Lifecycle

Transitioning from a blank conceptual canvas to a completed multimedia asset requires a highly structured approach. The official operational pathway is logically divided into four distinct phases to maximize user control.

Drafting Precise Directorial Prompts And Visual References

The initial stage requires the creator to articulate their creative vision with extreme structural clarity. Users input detailed textual descriptions or upload structural reference images to guide the system. The model responds best to comprehensive directorial instructions detailing camera movements, atmospheric lighting conditions, and specific actor blocking. A built-in language processor carefully decodes these complex linguistic inputs to establish the foundational visual blueprint.

Configuring Technical Parameters For Target Media Platforms

Before the rendering protocol commences, operators must define the technical boundaries of their specific project. This involves selecting the appropriate aspect ratio, ranging from vertical formats optimized for mobile devices to traditional cinematic widescreen dimensions. Users also determine the target output resolution and the specific duration required for their chosen distribution channel, ensuring the final file perfectly meets exact technical delivery specifications.

Processing Multimodal Data Through Advanced AI Architecture

During the third operational phase, the creator steps back as the computational engine takes full control of the production. The system processes the spatial and temporal data streams simultaneously, handling the intricate physics simulations and lighting calculations required for realism. Concurrently, the audio generation module synthesizes the synchronized soundscape, weaving the environmental noise and physical feedback directly into the visual timeline without requiring human intervention.

Reviewing And Exporting Watermark-Free Production Assets

The final operational step involves vital quality assurance and subsequent digital distribution. Creators preview the fully rendered multimedia file directly within the browser interface, assessing both visual continuity and audio synchronization accuracy. Once approved, the production-ready file can be downloaded instantly without any restrictive watermarks. The asset is then ready for immediate social distribution or seamless integration into advanced non-linear editing suites for final color grading.

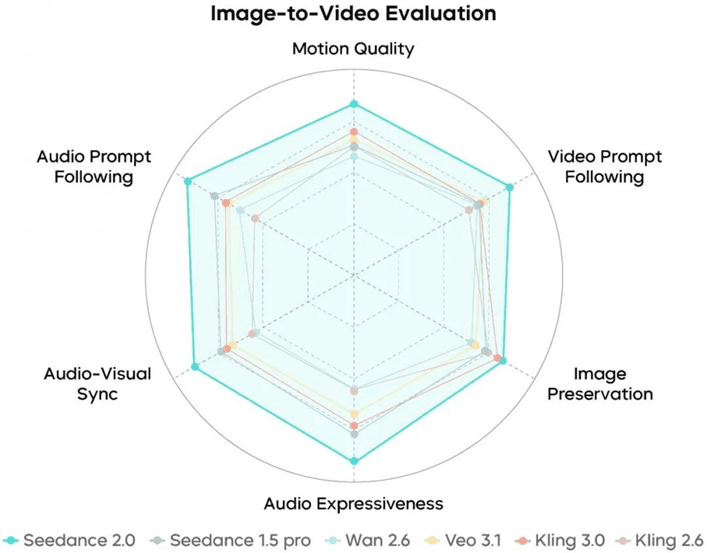

Evaluating Architectural Advantages Against Conventional Generation Models

To truly understand the practical shifts in modern workflow efficiency, we must examine how this integrated approach contrasts with traditional decentralized visual generation methods utilized in the past.

| Technical Assessment Area | Conventional Decentralized Video Generators | Seedance 2.0 Integrated Architecture |

| Narrative Time Constraints | Restricted to brief clips lacking story progression | Supports extended sequences for complete narrative arcs |

| Sensory Output Modalities | Strictly limited to silent visual pixel generation | Delivers synchronized environmental audio alongside visuals |

| Subject Identity Retention | High probability of severe texture and identity drift | Maintains stable character geometry across varied angles |

| Visual Output Fidelity | Often degraded by compression artifacts and noise | Preserves intricate details through high definition rendering |

Navigating Prompt Dependency And Minor Generation Artifacts

While the architectural advancements are undeniably substantial, my technical observations indicate that the system is not entirely immune to the inherent unpredictability of generative computations. The final output quality remains heavily tethered to the linguistic precision and logical structure of the user prompt. Ambiguous phrasing can easily lead the internal language model astray, resulting in physically illogical environments or distorted anatomical features. Furthermore, achieving an exact, highly specific micro-interaction between two complex subjects may still require multiple regeneration cycles. Recognizing these boundaries ensures the tool is utilized effectively as a powerful visual drafting mechanism rather than a flawless final rendering engine that completely replaces human oversight.