How Still Images Quietly Become Dynamic Narratives Today

- 1 Why Static Images No Longer Feel Complete Alone

- 1.1 Motion As A New Baseline Expectation

- 1.2 Traditional Production Still Has Friction

- 1.3 The Shift Toward Intent-Driven Creation

- 2 How The System Interprets Creative Intent

- 2.1 From Language To Visual Motion Behavior

- 2.2 Image as a Structural Constraint

- 2.3 Model Choice Influences Output Style

- 3 What The Actual Workflow Looks Like

- 3.1 Three-Step Generation Process From Input To Output

- 3.1.1 Step 1: Upload Source Image

- 3.1.2 Step 2: Enter Motion Description

- 3.1.3 Step 3: Generate And Wait For Output

- 3.2 Comparing Generative Workflow And Traditional Editing

- 3.3 Where This Approach Feels Most Effective

- 3.4 Concept Exploration And Idea Testing

- 3.5 Personal Visual Storytelling Scenarios

- 3.6 Where Limitations Become Visible

- 3.6.1 Dependence On Prompt Clarity

- 3.6.2 Occasional Motion Imperfections

- 3.6.3 Iteration As Part Of The Process

- 3.7 What This Signals About Creative Direction

- 3.8 How Photo To Video Reflects A Larger Shift

- 4 What This Means For Future Workflows

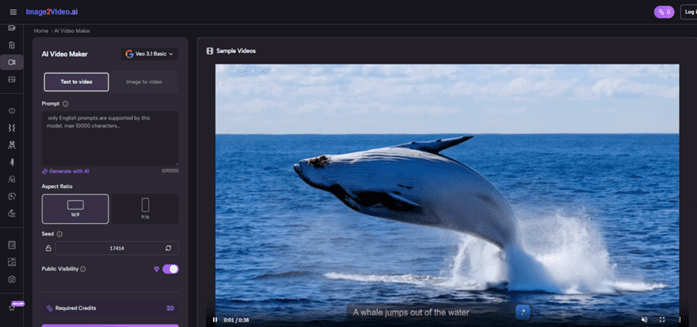

There is a moment in most creative workflows where an idea feels clear, but the path to execution feels unnecessarily complicated. You might have a strong visual, a clear mood, even a sense of motion—but translating that into video traditionally requires editing software, timelines, and technical fluency. What I noticed while experimenting with Image to Video AI is that the gap between imagination and output is narrowing in a very different way.

Instead of constructing motion step by step, you describe it. And that small shift changes how visual ideas take shape.

Why Static Images No Longer Feel Complete Alone

The role of images in digital content has evolved.

Motion As A New Baseline Expectation

On most platforms today, motion is not an enhancement—it is expected. Static visuals often feel like placeholders rather than finished expressions.

Traditional Production Still Has Friction

Even with modern tools, video creation involves:

- timelines and layers

- keyframes and transitions

- export settings and rendering

These steps create a barrier, especially for early-stage ideas.

The Shift Toward Intent-Driven Creation

Instead of asking “how do I animate this?” creators are increasingly asking:

- What should move?

- How should it feel?

- What emotional tone should it carry?

This is where generative systems such as Photo to Video begin to matter.

How The System Interprets Creative Intent

The interesting part is not just generation, but translation.

From Language To Visual Motion Behavior

Natural Language As Input Layer

In my testing, prompts such as:

- slow cinematic zoom

- soft environmental motion

- subtle emotional lighting

seem to translate into distinct motion patterns. The system appears to map descriptive language into movement logic.

Image as a Structural Constraint

The uploaded image defines:

- composition

- subject hierarchy

- spatial balance

Everything generated remains anchored to this structure.

Model Choice Influences Output Style

Different models seem to affect:

- realism

- motion smoothness

- stylistic interpretation

Even without explicit controls, the variation is noticeable.

What The Actual Workflow Looks Like

The official process is intentionally minimal.

Three-Step Generation Process From Input To Output

Step 1: Upload Source Image

Provide a JPEG or PNG as the base visual.

Step 2: Enter Motion Description

Describe movement, tone, and style using natural language.

Step 3: Generate And Wait For Output

The system processes the request and produces a video after a short delay.

There are no intermediate editing steps, which simplifies the process but also limits how much you can adjust along the way.
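To make the three steps concrete, here is a minimal sketch of how the two inputs could be checked and bundled before generation. It is not the tool's actual API: `GenerationRequest`, `build_request`, and the file name are hypothetical names chosen for illustration, and the extension check is a simplified stand-in for real image validation.

```python
from dataclasses import dataclass
from pathlib import Path

# Formats named in Step 1; checking the extension only is a simplification.
ALLOWED_EXTENSIONS = {".jpg", ".jpeg", ".png"}


@dataclass(frozen=True)
class GenerationRequest:
    """Hypothetical container for the two inputs the workflow asks for."""
    image_path: Path
    motion_description: str


def build_request(image_path: str, motion_description: str) -> GenerationRequest:
    """Check the inputs from steps 1 and 2 and bundle them for step 3."""
    path = Path(image_path)
    if path.suffix.lower() not in ALLOWED_EXTENSIONS:
        raise ValueError(f"Expected a JPEG or PNG image, got: {path.name}")
    description = motion_description.strip()
    if not description:
        raise ValueError("The motion description must not be empty")
    return GenerationRequest(image_path=path, motion_description=description)


# Step 1 (image) and step 2 (description); step 3 would hand this to the generator.
request = build_request("portrait.png", "  slow cinematic zoom, soft environmental motion ")
print(request.motion_description)
```

The point of the sketch is how little the workflow needs: one image and one sentence. Everything else is left to the model.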

Comparing Generative Workflow And Traditional Editing

| Aspect | Generative Approach | Traditional Editing |
| --- | --- | --- |
| Entry Barrier | Low | High |
| Time To Output | Minutes | Hours |
| Control Precision | Medium | High |
| Learning Curve | Minimal | Steep |
| Iteration Speed | Fast | Slow |

The difference lies more in workflow philosophy than capability.

Where This Approach Feels Most Effective

Short Form Content Creation Contexts

Fast-moving platforms benefit from:

- quick turnaround

- multiple variations

- lower production overhead

Concept Exploration And Idea Testing

Instead of committing to one direction, creators can:

- test multiple visual interpretations

- iterate quickly

- refine based on results

Personal Visual Storytelling Scenarios

Turning still images into motion introduces:

- emotional depth

- temporal flow

- narrative continuity

Where Limitations Become Visible

No generative system is without constraints.

Dependence On Prompt Clarity

Results vary depending on how clearly the intent is described.

Occasional Motion Imperfections

In some outputs, small details in motion can feel slightly artificial.

Iteration As Part Of The Process

Achieving a specific outcome often requires multiple generations.

These behaviors are consistent with current generative models.
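Because iteration is part of the process, it can help to prepare a small batch of prompt variations up front rather than rewording one prompt by hand. The sketch below is only an illustration of that habit: `prompt_variations` is a hypothetical helper, and "warm nostalgic mood" is an invented example phrase, while the other phrases come from the prompts mentioned earlier.

```python
from itertools import product


def prompt_variations(movements, tones):
    """Return one prompt per (movement, tone) pair, in input order."""
    return [f"{movement}, {tone}" for movement, tone in product(movements, tones)]


variants = prompt_variations(
    ["slow cinematic zoom", "soft environmental motion"],
    # The second tone is an invented example, not taken from the tool.
    ["subtle emotional lighting", "warm nostalgic mood"],
)

# Each variant would be submitted as its own generation and compared by eye.
for variant in variants:
    print(variant)
```

Two movements and two tones give four prompts, which is usually enough to see which direction is worth refining.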

What This Signals About Creative Direction

The most meaningful change is not speed, but abstraction.

From Technical Execution To Conceptual Direction

Creators are moving from building motion manually to describing desired outcomes.

A Different Skill Emphasis Emerging

The focus shifts toward:

- clarity of expression

- understanding visual language

- iterative refinement

How Photo To Video Reflects A Larger Shift

The concept of transforming photos into motion is not new. What is new is how directly it can now be done.

Instead of constructing animation step by step, the system interprets intent and generates motion in a single pass.

What This Means For Future Workflows

The implication is not that traditional tools disappear, but that:

- Early-stage ideation becomes faster

- Experimentation becomes cheaper

- More creators can participate

For many, the first version of a video may no longer be edited—it may be generated.

And that alone changes how creative processes begin.