AI got it wrong and nobody noticed: real cases and the reliability gap they expose

- Case 1: Three models, one document, three different failure modes
- Case 2: The hallucination bottleneck that high-stakes industries hit first
- What a multi-model architecture actually changes
- Case 3: Document scale and the 29% correction problem
- What to take away before your next high-stakes output

The most expensive AI failures are rarely dramatic. They do not arrive as system crashes or error messages. They arrive as polished, fluent, confident-looking output that happens to be wrong in exactly the ways that matter.

This is the reliability problem now drawing serious attention in discussions of AI tools and their real-world limitations. The question is not whether a model performs well on average, but whether it can be trusted when the stakes are specific and the margin for error is narrow. In language processing, that question has a well-documented set of answers, and the cases below are worth examining in detail.

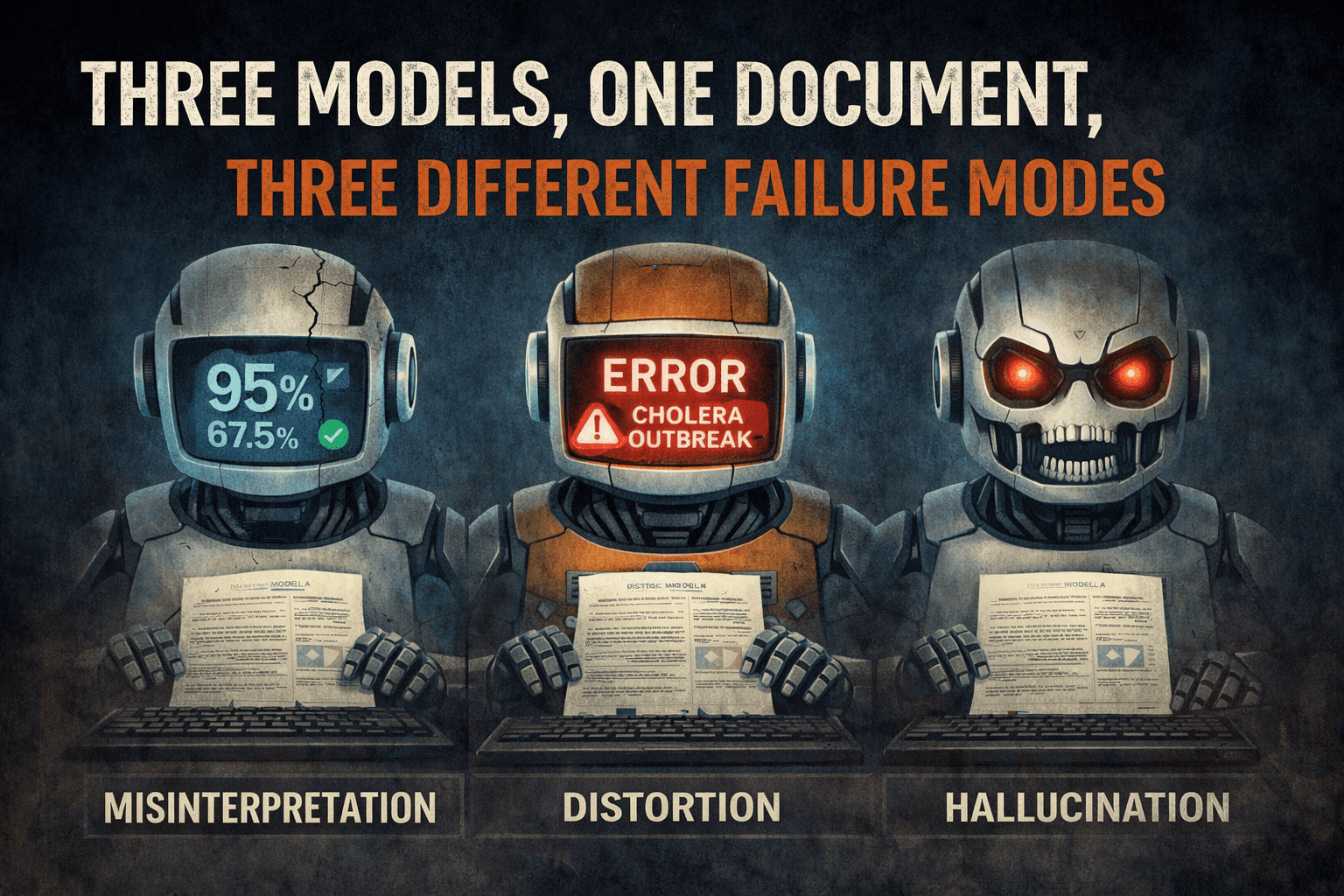

Case 1: Three models, one document, three different failure modes

In an internal test, three separate top-tier AI language models were each evaluated independently on the same dataset of complex multilingual legal contracts. What emerged was not a single category of failure but three distinct ones: each model failed in its own way on the same source material.

Model A showed a 12% error rate when handling the honorific systems of Asian languages. It misread the formality signals embedded in the source text and distorted the contractual register in ways that would affect how the receiving party interpreted its obligations.

Model B hallucinated numerical dates in Romance languages. The output was not visibly broken; it was plausible-sounding text with incorrect figures. In a legal document, a misrendered date is not a stylistic lapse. It is a material inaccuracy with potential legal consequences.

Model C failed to reproduce the formal register required for German corporate filings, defaulting to a tone that would have been structurally inappropriate in that context.

Expert analysis, including published industry reviews of where AI language tools still fall short, consistently identifies these same domains as the ones that expose individual model weaknesses most reliably: specialized legal text, culture-dependent formality registers, and language pairs with limited high-quality training data.

What the three cases share is something more instructive than the individual errors themselves. Each model failed differently. No single model experienced all three failure modes simultaneously. Each had its own bias profile, its own distribution gaps, its own category of blind spot. That is not a software problem to be patched in the next model release. It is an inherent feature of how individual large language models are built and trained.

Case 2: The hallucination bottleneck that high-stakes industries hit first

Enterprise adoption of AI language tools has grown sharply. According to the Lokalise Localization Trends Report (2025), AI language tool use in the finance sector alone grew by 700% between 2023 and 2024. That rate of adoption is significant. So is the reliability ceiling it has exposed.

Individual top-tier large language models hallucinate (that is, generate plausible but factually inaccurate content) at a rate of 10% to 18% during complex language processing tasks, according to data synthesized from Intento's State of Language Automation 2025 and WMT24 benchmarks.

In most contexts, a 10% error rate is a reasonable cost of automation. In financial disclosures, regulatory filings, or clinical documentation, it is not. A quarterly earnings summary where one language version contains a hallucinated figure does not create an editing problem. It creates a compliance one. The margin for acceptable error in these contexts collapses to near zero, while the volume of content requiring processing only keeps growing.

This is the bottleneck that organizations hit when they move fast on AI language tools without designing for the structural reliability ceiling of individual models. The failure was not in the tools themselves. It was in the assumption that a single model, however capable on benchmark tests, could be treated as a verified source of output in high-stakes workflows.

The pattern underneath these cases

These cases are not isolated incidents. They point to a structural characteristic of how single-model AI processing works.

Any given model’s output is, in statistical terms, one sample from one distribution. It can be excellent. It can be subtly wrong. The model itself has no mechanism to distinguish between the two on a given sentence; it produces confident-looking output regardless of whether it has rendered the source accurately. It does not flag its own uncertainty the way a human expert would.

This is why the field of AI reliability engineering, which Tech Behind It has covered in the context of how multi-agent systems reduce single-point failure, has been turning toward architectures in which multiple independent models process the same input simultaneously. The core principle comes from fault-tolerant systems design: if several independent agents are asked the same question and the majority produce the same output, the probability that a majority share the same error is dramatically lower than the probability that any one of them fails alone, provided their errors are not strongly correlated.
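The arithmetic behind that principle can be sketched with a simple binomial model. The sketch below assumes each model errs independently with the same probability p (0.15 here, inside the 10% to 18% range cited above) and computes how likely it is that more than half of n models err on the same item. Independence is the key assumption and the weakest one: real models share training data and design choices, so their errors are partly correlated and the real-world gain will be smaller. For odd n, with a single correct output that every non-erring model reproduces, a wrong majority vote requires a majority of models to err, so this figure is an upper bound on that risk. The function name `majority_error_probability` is hypothetical.

```python
from math import comb


def majority_error_probability(p: float, n: int) -> float:
    """Probability that more than half of n models err on one item.

    Assumes each model errs independently with probability p (an idealization).
    For odd n this upper-bounds the chance that a majority vote picks a wrong
    output, because the erring models would also have to agree on the same error.
    """
    k_min = n // 2 + 1  # smallest strict majority of n
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(k_min, n + 1))


if __name__ == "__main__":
    # Per-model error rate of 15%, ensembles of increasing (odd) size.
    for n in (1, 3, 5, 9):
        print(f"n={n}: {majority_error_probability(0.15, n):.4f}")
```

Under these idealized assumptions, going from one model to three cuts the chance of a wrong majority from 15% to about 6%, and it keeps falling as n grows. Correlated errors erode that gain, which is why the independence of the models matters as much as their number.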

Applied to language tasks, this means comparing outputs across multiple models, identifying where they diverge, and selecting what the majority produce. Hallucinations, by their nature, are model-idiosyncratic. Different models hallucinate differently. A multi-model architecture catches what any single model would have silently passed through.
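A minimal sketch of the compare, find-divergence, and select step, assuming the candidate outputs for one sentence have already been collected as strings. The function name `select_by_majority` and the sample sentences are hypothetical, and this is not MachineTranslation.com's actual method. Exact matching after whitespace normalization is a deliberate simplification: valid translations often differ in wording, so a production system would need a semantic comparison rather than exact string matches.

```python
from collections import Counter


def select_by_majority(candidates: list[str]) -> tuple[str, float]:
    """Return the most common candidate and the share of models that produced it.

    Candidates are compared after collapsing whitespace, so outputs that differ
    only in spacing count as the same vote. Ties go to the candidate seen first.
    """
    if not candidates:
        raise ValueError("need at least one candidate output")
    normalized = [" ".join(c.split()) for c in candidates]
    winner, votes = Counter(normalized).most_common(1)[0]
    return winner, votes / len(normalized)


if __name__ == "__main__":
    # Hypothetical outputs from three models for the same source sentence.
    outputs = [
        "Payment is due on 14 March 2025.",
        "Payment is due on 14 March 2025.",
        "Payment is due on 4 March 2025.",  # one model hallucinates the date
    ]
    winner, agreement = select_by_majority(outputs)
    diverged = agreement < 1.0  # any disagreement marks a divergence point
    print(winner, f"agreement={agreement:.2f}", f"diverged={diverged}")
```

Here the hallucinated date is outvoted, and the agreement score of 0.67 records that the models diverged on this sentence, which is the kind of signal a later review step can act on.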

What a multi-model architecture actually changes

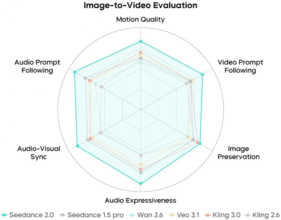

This is the design logic behind MachineTranslation.com, an AI translation tool that runs language processing through 22 models simultaneously, evaluates source context, and selects the output that the majority of models produce. According to internal benchmark data from MachineTranslation.com, the hallucination rate of 10% to 18% seen in individual models drops to under 2% under this architecture.

That figure is not a marginal improvement. For the use cases described above (legal documents, financial disclosures, and regulated industry content), the difference between a 12% error rate and a sub-2% error rate is the difference between output that requires full human review before use and output that can be acted on with a much higher degree of confidence.

The architecture also allows for a human verification layer as a final escalation step for content where any residual error is unacceptable. The human reviewer in that workflow works from AI-verified output rather than raw single-model output, which changes the nature of the review task fundamentally.
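The escalation step can be sketched in the same way. Assuming each segment's candidate outputs are available, the function below picks the majority output and flags the segment for human review when model agreement falls below a threshold. The names `route_segments` and `SegmentResult`, the 0.8 threshold, and the sample clauses are all hypothetical; the source does not describe how MachineTranslation.com decides what to escalate.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class SegmentResult:
    segment_id: str
    output: str
    agreement: float          # share of models that produced `output`
    needs_human_review: bool  # True when agreement is below the threshold


def route_segments(
    candidates_by_segment: dict[str, list[str]], threshold: float = 0.8
) -> list[SegmentResult]:
    """Select the majority output per segment and flag low-agreement segments.

    The 0.8 default is an illustrative assumption, not a recommended value.
    """
    results = []
    for segment_id, candidates in candidates_by_segment.items():
        if not candidates:
            raise ValueError(f"segment {segment_id!r} has no candidate outputs")
        output, votes = Counter(candidates).most_common(1)[0]
        agreement = votes / len(candidates)
        results.append(
            SegmentResult(segment_id, output, agreement, agreement < threshold)
        )
    return results


if __name__ == "__main__":
    # Hypothetical candidates from five models for two contract clauses.
    candidates = {
        "clause-1": ["The term is 24 months."] * 5,
        "clause-2": ["Notice: 30 days."] * 3 + ["Notice: 60 days.", "Notice: 13 days."],
    }
    for r in route_segments(candidates):
        print(r.segment_id, r.output, f"{r.agreement:.1f}", r.needs_human_review)
```

Only the second clause, where three of five models agree, is flagged for the reviewer, which is one way a human layer can concentrate on disputed segments instead of rereading every sentence.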

Case 3: Document scale and the 29% correction problem

Scale introduces a compounding dimension that individual model tests do not fully capture.

Among users who processed large documents (over 50 pages) without predefined glossaries or structured workflows, 29% reported needing to correct more than 7% of the output sentences, according to internal benchmark data from MachineTranslation.com. At volume, that correction burden does not feel like an editing task. It feels like redoing the work.

When the same documents were processed through the multi-model architecture, the proportion of users reporting that level of post-edit effort dropped from 29% to 14%. The reduction reflects what happens when model-specific errors are filtered out at the output stage rather than discovered downstream during review.

For teams operating at scale, that difference has a measurable operational cost. Post-editing at volume is a workflow bottleneck with real consequences for delivery timelines, review cycles, and resource allocation. The cases above illustrate what happens when that workflow depends on a single model, and what changes structurally when it does not.

What to take away before your next high-stakes output

The lesson from these cases is not that AI language tools are unreliable. It is that single-model AI processing carries a structural reliability ceiling that most business workflows eventually hit.

The practical implication is to match the reliability architecture to the stakes. For low-stakes, high-volume content, a single model is often sufficient, and the speed advantage is real. For anything that reaches clients, regulators, courts, patients, or investors, the architecture needs to account for the error profile of individual models, not just their average benchmark performance.
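That matching can be written down as an explicit routing rule. The sketch below is a hypothetical policy, not a standard: the audience labels come from the list in the paragraph above, and the choice between consensus alone and consensus plus human review follows the escalation idea described earlier in this article for content where any residual error is unacceptable. The names `Pipeline`, `HIGH_STAKES_AUDIENCES`, and `choose_pipeline` are illustrative.

```python
from enum import Enum


class Pipeline(Enum):
    SINGLE_MODEL = "single model"
    CONSENSUS = "multi-model consensus"
    CONSENSUS_PLUS_HUMAN = "multi-model consensus + human review"


# Hypothetical set of high-stakes audiences, taken from the examples in the text.
HIGH_STAKES_AUDIENCES = {"client", "regulator", "court", "patient", "investor"}


def choose_pipeline(audience: str, residual_error_acceptable: bool) -> Pipeline:
    """Map an output's audience and error tolerance to a processing pipeline."""
    if audience.strip().lower() not in HIGH_STAKES_AUDIENCES:
        # Low-stakes, high-volume content: a single model's speed is usually worth it.
        return Pipeline.SINGLE_MODEL
    if residual_error_acceptable:
        return Pipeline.CONSENSUS
    # Any residual error is unacceptable: add the human escalation step.
    return Pipeline.CONSENSUS_PLUS_HUMAN


if __name__ == "__main__":
    print(choose_pipeline("internal wiki", True).value)
    print(choose_pipeline("Regulator", True).value)
    print(choose_pipeline("court", False).value)
```

Keeping the rule explicit makes the trade-off reviewable: anyone can see which outputs are allowed to rely on a single model and why.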

This is the same principle that applies across AI tool selection for real-world workflows: the right tool is not always the most capable one tested in isolation. It is the one whose design matches how failure actually occurs in your specific use case.

The cases above are instructive precisely because the failures were not obvious. They were quiet, plausible, and formatted correctly. They were the kind of errors that pass review until they do not. Understanding the mechanism behind them is the first step toward not repeating them.